On March 9, 2026, every RICS-regulated firm worldwide became obliged to govern its use of artificial intelligence, not as a best-practice suggestion but as a mandatory professional standard. For party wall surveyors, this shift is particularly significant: a discipline already walking a tightrope between two neighbours' competing interests must now also demonstrate that any AI-assisted defect diagnosis, schedule of condition, or award drafting meets strict ethical, transparency, and accountability requirements. Responsible AI in party wall surveys is no longer a future-gazing topic; it is the operating reality for every practitioner picking up a party wall matter today.

This article unpacks what the RICS global AI standard demands, how it maps onto the specific workflows of party wall practice, and what surveyors must do right now to stay compliant and credible — including in expert witness contexts governed by CPR Part 35.

Key Takeaways 📌

- The RICS Responsible AI standard became mandatory on March 9, 2026, applying to all members and regulated firms globally. [1]

- Party wall surveyors must maintain written risk registers, reviewed at least quarterly, covering all AI tools used in service delivery. [4]

- Written client disclosure is required before using AI in any party wall service, including options to opt out. [1]

- AI outputs used in party wall awards or expert witness reports must be subject to professional scepticism and randomised quality sampling. [4]

- CPR Part 35 credibility depends on surveyors being able to demonstrate that AI tools were used as aids — not substitutes — for professional judgment.

What the RICS Global AI Standard Actually Requires

RICS published its first global professional standard for the responsible use of AI in surveying practice, with an effective date of March 9, 2026. [1] The standard is framed as a conduct standard — meaning it sets behavioural and governance expectations rather than prescribing specific technologies. [2] This is deliberate: the surveying profession spans dozens of jurisdictions and an enormous range of AI tools, from automated valuation models to machine-learning crack detection software.

For party wall surveyors, the key obligations break down into five core areas:

1. Risk Registers and Quarterly Reviews

Every RICS-regulated firm must develop and implement a written policy for responsible AI use, supported by a risk register. [4] That register must include:

| Risk Register Component | Description |
|---|---|
| Risk description | What the AI tool does and where it could fail |
| Likelihood assessment | How probable is a harmful outcome? |
| Impact projection | What are the consequences if it goes wrong? |
| Mitigation plan | Steps taken to reduce likelihood or impact |
| Risk appetite definition | What level of risk is acceptable? |
| RAG rating | Red/Amber/Green status for each risk |

💡 Pull Quote: "Risk registers are not a one-time exercise. The standard mandates review and update at minimum every three months." [4]

This quarterly cadence matters enormously for party wall practice, where AI tools are evolving rapidly. A crack-analysis app that earned a Green RAG rating in January 2026 may warrant reassessment by April if the vendor updates its underlying model.
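As a rough illustration of how a register entry might be held in software, the sketch below models the components in the table as a Python dataclass and flags entries whose review is overdue. The 1 to 5 likelihood and impact scales, the thresholds that drive the RAG rating, the 91-day review interval, and the tool details are all assumptions made for illustration; the standard does not prescribe any of them.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class Rag(Enum):
    RED = "red"
    AMBER = "amber"
    GREEN = "green"


# Assumption: "quarterly" is approximated as 91 days; a firm may prefer calendar quarters.
REVIEW_INTERVAL = timedelta(days=91)


@dataclass
class RiskEntry:
    tool: str             # hypothetical tool name
    description: str      # what the tool does and where it could fail
    likelihood: int       # 1 (rare) to 5 (almost certain), illustrative scale
    impact: int           # 1 (minor) to 5 (severe), illustrative scale
    mitigation: str
    risk_appetite: int    # highest likelihood x impact score the firm accepts
    last_reviewed: date

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

    @property
    def rag(self) -> Rag:
        # Illustrative thresholds only: above appetite is Red, above half of it is Amber.
        if self.score > self.risk_appetite:
            return Rag.RED
        if self.score > self.risk_appetite // 2:
            return Rag.AMBER
        return Rag.GREEN

    def review_overdue(self, today: date) -> bool:
        return today - self.last_reviewed > REVIEW_INTERVAL


entry = RiskEntry(
    tool="crack-analysis app",
    description="Flags cracks in site photographs; may miss hairline cracks in poor light",
    likelihood=2,
    impact=3,
    mitigation="Surveyor checks every image, flagged or not",
    risk_appetite=12,
    last_reviewed=date(2026, 1, 12),
)
print(entry.rag.value, entry.review_overdue(date(2026, 4, 20)))  # prints: green True
```

In this example the tool is still rated Green on its January figures, but the overdue flag prompts the reassessment that a vendor model update would call for.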

2. System Governance Assessments Before Deployment

Before using any AI system to deliver a party wall service, firms must conduct and record in writing a system governance assessment covering: [4]

- The specific AI application being used

- Potential risks and benefits

- Alternative approaches considered

This applies whether the tool is off-the-shelf software or a proprietary system developed in-house. For surveyors using AI to analyse photographic evidence of wall damage or to draft standard party wall notices, this means creating a documented rationale before the tool enters the workflow — not after a dispute arises.
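One way to make "before, not after" checkable is to store each assessment as a dated record and refuse deployment until it is complete. The following is a minimal sketch under that assumption; the class, field names, and `may_deploy` helper are hypothetical and simply mirror the three items listed above.

```python
from dataclasses import dataclass, fields
from datetime import date


@dataclass
class GovernanceAssessment:
    application: str    # the specific AI application being used
    risks: str
    benefits: str
    alternatives: str   # alternative approaches considered
    assessed_on: date

    def missing_sections(self):
        """Return the names of text sections that were left blank."""
        return [
            f.name
            for f in fields(self)
            if isinstance(getattr(self, f.name), str)
            and not getattr(self, f.name).strip()
        ]


def may_deploy(assessment: GovernanceAssessment, first_use: date) -> bool:
    # Deployable only if every section is filled in and the record predates first use.
    return not assessment.missing_sections() and assessment.assessed_on <= first_use


draft = GovernanceAssessment(
    application="LLM drafting of standard party wall notices",
    risks="Incorrect section references; wrong notice period",
    benefits="Faster first drafts",
    alternatives="",   # not yet completed
    assessed_on=date(2026, 3, 2),
)
print(draft.missing_sections(), may_deploy(draft, date(2026, 3, 9)))
```

A record like this also gives the firm a dated file note to produce if the tool's use is later questioned.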

3. Written Client Disclosure

Clients — both building owners and adjoining owners — must be informed in writing of when and how AI will be used in delivering party wall services. [1] The disclosure must also explain options for redress or opting out. This is a significant shift in client care obligations. A standard party wall surveyor appointment letter now needs an AI use clause, clearly worded and acknowledged by the client.

4. Quality Assurance Through Randomised Sampling

Even where AI is used at high volume or in automated workflows, firms must undertake randomised dip samples of AI outputs at regular intervals. [4] For a busy party wall practice processing multiple schedules of condition per week using AI-assisted photography tools, this means a documented sampling protocol — not just an informal sense that "the outputs look right."
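A documented sampling protocol can be as simple as a seeded random draw that is logged alongside the review findings. The sketch below shows one possible approach; the 10% rate, the minimum of one item, and the schedule reference format are illustrative assumptions, not figures taken from the standard.

```python
import random


def dip_sample(output_ids, rate=0.1, minimum=1, seed=None):
    """Pick a random subset of AI outputs for manual review.

    rate and minimum are illustrative assumptions, not requirements of the standard.
    """
    ids = list(output_ids)
    if not ids:
        return []
    size = min(len(ids), max(minimum, round(len(ids) * rate)))
    rng = random.Random(seed)  # record the seed so the draw can be reproduced later
    return sorted(rng.sample(ids, size))


# Hypothetical references for a period's AI-assisted schedules of condition.
period_outputs = [f"SOC-{n:03d}" for n in range(1, 41)]
selected = dip_sample(period_outputs, rate=0.1, seed=2026)
print(len(selected))  # prints: 4
```

Recording the seed and the selected references with each review lets the firm show that the sample was drawn at random rather than chosen after the fact.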

5. Professional Judgment Remains Non-Delegable

The standard is unambiguous: surveyors must assess the reliability of AI outputs and maintain full accountability for all work. [2] AI is a tool. The professional judgment, the expertise, and the liability remain with the surveyor.

Applying the RICS Standard to Everyday Party Wall Practice

Understanding the standard in the abstract is one thing. Mapping it onto the specific tasks of a party wall surveyor is where compliance becomes practical. Below is a workflow framework for integrating AI tools responsibly across the key stages of a party wall matter.

Stage 1: Pre-Notice and Initial Assessment

Many surveyors now use AI-assisted tools to review building plans, identify notifiable works, and assess proximity to boundaries. These tools can flag potential issues with party wall loft conversions or works involving insulation in a party wall that a less experienced eye might miss.

Compliance checklist at this stage:

- ✅ System governance assessment completed and filed

- ✅ AI tool included in the firm's risk register with current RAG rating

- ✅ Client appointment letter includes AI disclosure clause

- ✅ Surveyor has independently verified AI-flagged issues against plans

A common failure point is relying on AI to determine whether a party wall notice is required at all. If the AI tool misclassifies works as non-notifiable and a party wall notice is not served when it should be, the surveyor — not the software vendor — carries the professional liability.

Stage 2: Schedule of Condition

The schedule of condition is perhaps the area where AI tools offer the greatest efficiency gains — and the greatest compliance risk. AI-powered photographic analysis can process hundreds of images quickly, flagging cracks, damp, and structural anomalies. For complex works, this can be genuinely valuable, particularly when combined with drone surveys for difficult-to-access elevations.

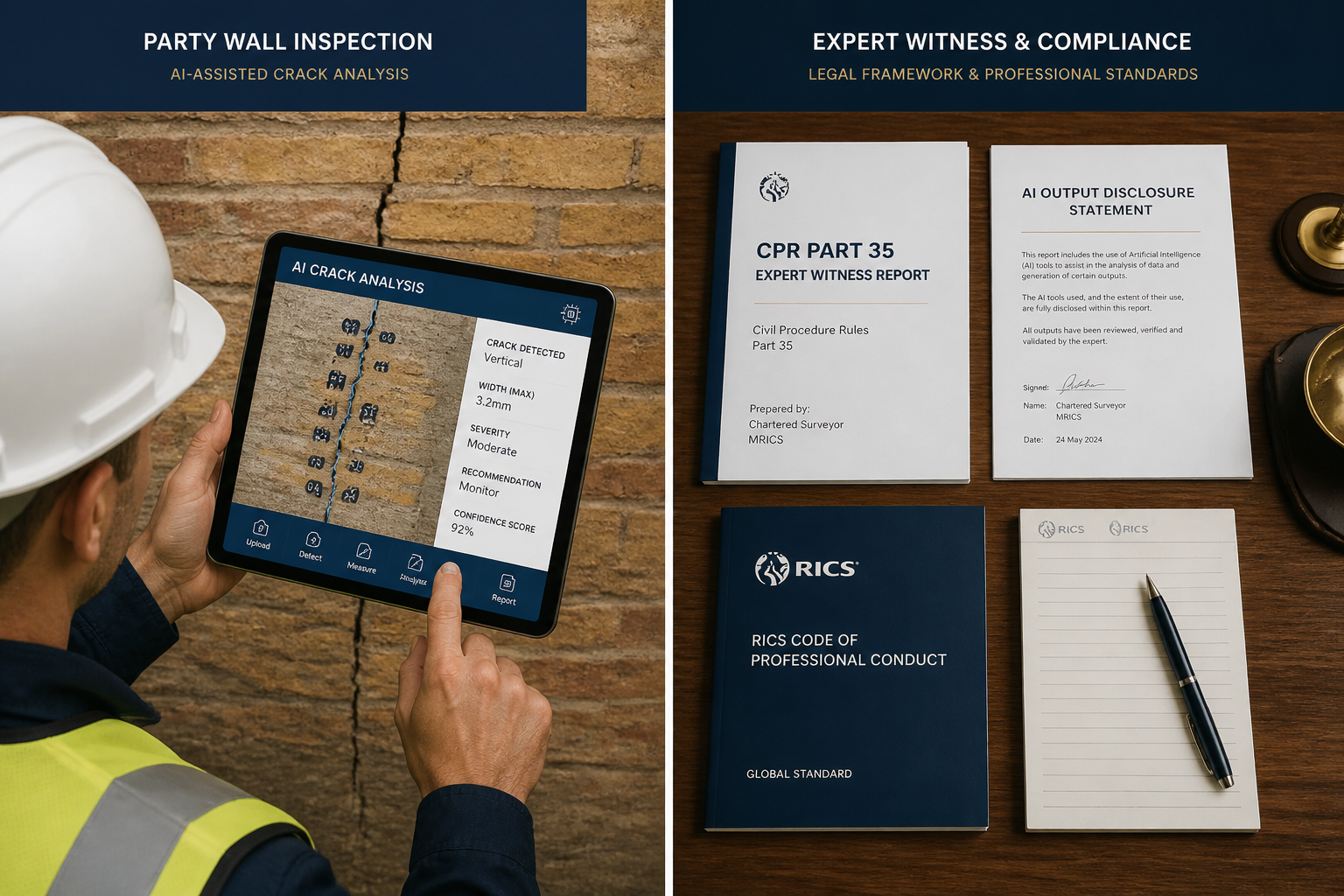

However, the RICS standard requires that AI outputs be subject to professional scrutiny. A surveyor who submits a schedule of condition where the defect descriptions were generated entirely by AI — without independent verification — is in breach of the standard. [2]

Practical workflow:

- Use AI tool to generate an initial defect log from site photographs

- Surveyor reviews every AI-flagged item on site or from raw images

- Surveyor adds, removes, or amends entries based on professional judgment

- Final schedule is signed off as the surveyor's own professional product

- The AI tool's contribution is recorded in the internal file, and in the client-facing document where the firm's client disclosure requires it
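The workflow above can be enforced in software by blocking sign-off until every entry carries a reviewer's name. This is a minimal sketch of that idea; the `DefectEntry` and `Schedule` classes, the photo references, and the surveyor name are hypothetical and do not represent any particular product.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DefectEntry:
    photo_ref: str
    description: str
    source: str = "ai"                 # "ai" or "surveyor"
    reviewed_by: Optional[str] = None  # surveyor who verified the entry


@dataclass
class Schedule:
    entries: list = field(default_factory=list)

    def unreviewed(self):
        return [e for e in self.entries if e.reviewed_by is None]

    def sign_off(self, surveyor: str) -> str:
        # Refuse sign-off while any entry lacks independent verification.
        pending = self.unreviewed()
        if pending:
            refs = ", ".join(e.photo_ref for e in pending)
            raise ValueError(f"Unreviewed entries: {refs}")
        return f"Schedule signed off by {surveyor}"


schedule = Schedule([
    DefectEntry("IMG_0041", "Hairline crack above window head"),
    DefectEntry("IMG_0042", "Damp staining at skirting level"),
])
for item in schedule.entries:
    # Set only after checking the raw image or the wall itself.
    item.reviewed_by = "A. Surveyor"
print(schedule.sign_off("A. Surveyor"))  # prints: Schedule signed off by A. Surveyor
```

Keeping the `source` field on each entry preserves the internal record of what the AI tool contributed, separate from what the surveyor added or amended.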

Stage 3: Award Drafting

AI drafting tools — including large language models — are increasingly used to generate first drafts of party wall awards. This is permissible under the RICS standard, provided the surveyor exercises professional judgment over the final output. [1]

Key risks in award drafting include:

- Hallucinated legal references: AI tools sometimes cite legislation incorrectly or invent case law

- Jurisdiction errors: Standard clauses may not reflect local planning conditions

- Outdated precedents: AI trained on older data may miss recent amendments to the Party Wall etc. Act 1996

⚠️ Critical point: Every clause in a party wall award must be reviewed and verified by the surveyor. The award is a legal document. An AI-generated error that causes a dispute to escalate is a professional conduct matter, not a software bug.

Stage 4: Dispute Resolution and Expert Witness Work

This is where responsible AI in party wall surveys intersects most directly with CPR Part 35 obligations. Where a party wall dispute reaches the courts and a surveyor is appointed as an expert witness, the expert's duty is to the court — not to the party who instructed them.

CPR Part 35 requires that expert reports are the genuine, independent opinion of the expert. If AI tools were used in forming that opinion, the expert must be able to:

- Explain what the AI tool did

- Demonstrate that they independently verified the AI's outputs

- Confirm that the opinion expressed is their own professional judgment

The RICS standard's requirement for professional scepticism and accountability [2] directly supports CPR Part 35 compliance. Surveyors who can point to a documented workflow — risk register, system governance assessment, sampling records — are in a far stronger position if their use of AI is challenged in cross-examination.

For matters involving structural complexity, pairing AI analysis with a structural survey or specialist monitoring surveys provides an additional layer of defensible evidence.

Building a Compliant AI Governance Framework: Practical Strategies Post-March 2026

Complying with the standard requires more than individual surveyor awareness; it demands firm-level governance infrastructure. Here is how to build it.

Establish a Firm AI Policy Document

Every RICS-regulated firm needs a written AI use policy. This document should cover:

- Which AI tools are approved for use in party wall matters

- The system governance assessment process for new tools

- Client disclosure templates

- The sampling protocol for quality assurance

- The escalation process when AI outputs are uncertain or contradictory

- How the risk register is maintained and reviewed

Implement a Quarterly Risk Register Review Cycle

The mandatory quarterly review [4] should be built into the firm's calendar as a standing agenda item. Each review should:

- Reassess RAG ratings for all active AI tools

- Document any new AI tools introduced since the last review

- Record any quality sampling findings

- Note any changes in relevant legislation (including EU Regulation 2024/1689, the EU AI Act, for firms operating in applicable jurisdictions) [4]

Train All Staff — Not Just Senior Surveyors

The RICS standard applies to all staff at regulated firms, not just partners or directors. Junior surveyors and support staff who interact with AI tools need to understand:

- The firm's AI use policy

- How to flag concerns about AI outputs

- The importance of never presenting AI-generated content as their own unverified work

Data Quality and Legal Compliance

Where firms use or develop AI systems that process personal data — for example, photographic schedules of condition containing images of a neighbour's property — the standard requires documented data quality policies and written legal compliance assessments. [4] Written permissions must be obtained before using individuals' personal data in AI systems.

For party wall surveyors, this has practical implications for how site photographs are stored, processed, and shared with AI tools. A photograph of an adjoining owner's bedroom wall, filed against their name and address, can be personal data. Processing it through a cloud-based AI analysis tool without an appropriate legal basis and disclosure is a compliance risk under both the RICS standard and applicable data protection law.

Jurisdiction-Specific Considerations

UK-based party wall surveyors primarily operate under the Party Wall etc. Act 1996 and RICS professional standards. However, firms with international operations or those using AI tools developed in the EU must also consider EU Regulation 2024/1689. [4] Where conflicts arise between RICS standards and local legislation, the standard requires these to be recorded and reported to RICS. [4]

The Ethical Dimension: Why Responsible AI Matters for Neighbour Disputes

Party wall matters are, at their core, disputes between neighbours. The stakes are personal as well as financial. An AI tool that incorrectly diagnoses pre-existing damage as caused by notifiable works — or vice versa — can inflame a dispute, damage a professional relationship, and expose a surveyor to a negligence claim.

The RICS standard's emphasis on ethical, transparent, and professionally overseen AI use [1] is not bureaucratic box-ticking. It reflects a genuine recognition that AI tools can be wrong, biased, or simply inappropriate for a given context. A surveyor who uses AI responsibly, with documented governance, client disclosure, and professional oversight, is not just compliant. They are better protected, more credible, and more likely to reach fair outcomes for the parties they serve.

For those seeking to understand the full scope of party wall surveying services or looking for guidance on choosing the right property survey in the context of a construction project, the integration of responsible AI practices is now a quality marker worth asking about when appointing a surveyor.

Conclusion: Actionable Next Steps for Party Wall Surveyors in 2026

The RICS global AI standard is not a distant regulatory horizon — it is the present-day framework within which every party wall surveyor must operate. The good news is that compliance is achievable with structured effort. Here are the immediate steps every firm should take:

- Audit current AI tool use — identify every AI application used in party wall matters, from drafting tools to photographic analysis software

- Create or update the firm's written AI use policy — include client disclosure templates and sampling protocols

- Build the risk register — complete RAG ratings for all tools and schedule the first quarterly review

- Update client appointment letters — add AI disclosure clauses before the next instruction is accepted

- Train all staff — ensure everyone understands the policy and their obligations under the RICS standard

- Document everything — system governance assessments, sampling records, and risk register reviews must be retained as part of the professional file

- Review data handling practices — ensure AI tools processing site photographs or personal data have appropriate legal basis and disclosure

The integration of AI into party wall surveying is not a threat to professional judgment — it is an opportunity to work more efficiently, more consistently, and with better-evidenced outcomes. But that opportunity is only realised when AI is used responsibly, with the governance infrastructure the RICS standard now demands. [1][2]

References

[1] RICS Launches Landmark Global Standard On Responsible Use Of AI In Surveying – https://www.rics.org/news-insights/rics-launches-landmark-global-standard-on-responsible-use-of-ai-in-surveying

[2] AI Responsible Use Standard – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html

[3] Expert Witness Protocols For AI Generated Evidence Challenges In Valuation Disputes RICS March 2026 Standards Compliance – https://nottinghillsurveyors.com/blog/expert-witness-protocols-for-ai-generated-evidence-challenges-in-valuation-disputes-rics-march-2026-standards-compliance

[4] Responsible Use Of Artificial Intelligence In Surveying Practice September 2025 – https://www.rics.org/content/dam/ricsglobal/documents/standards/Responsible-use-of-artificial-intelligence-in-surveying-practice_September-2025.pdf