On 9 March 2026, a deadline passed that every RICS member and regulated firm worldwide was required to meet: full mandatory compliance with the world's first professional standard governing the responsible use of artificial intelligence in surveying. No grace period. No opt-out. Just a clear, enforceable expectation that AI tools used in surveying services must now operate within a defined ethical and governance framework [4].

For many firms, this milestone arrived faster than anticipated. For those still building their compliance roadmap, the clock is already running. This guide breaks down what the standard requires, how to implement it in practice, and how AI can enhance surveying services without eroding the professional trust clients depend on.

Key Takeaways 📌

- Mandatory from 9 March 2026: The RICS AI standard is now enforceable for all members and regulated firms globally [4].

- AI supports, never replaces: Professional judgement remains the surveyor's responsibility — AI is a tool, not a decision-maker [3].

- "Material impact" is the trigger: The standard applies when AI has a material impact on service delivery, requiring careful assessment [2].

- Written policies are non-negotiable: All regulated firms using AI must produce a formal responsible AI use policy backed by a risk register [1].

- Governance steps must be documented: Pre-deployment assessments, system reviews, and accountability records must be kept in writing [5].

Understanding the RICS AI Standard: What Changed in 2026

The Standard's Origins and Scope

The RICS Responsible Use of Artificial Intelligence in Surveying Practice standard was published in September 2025 and came into mandatory global effect on 9 March 2026 [5][6]. It applies to all RICS members and regulated firms — regardless of firm size, geography, or specialism.

The standard does not ban AI. Far from it. It sets a framework for responsible adoption, recognising that AI tools are already embedded in many surveying workflows: from automated valuation models (AVMs) and drone-assisted roof inspections to machine-learning platforms that flag structural anomalies in building surveys.

💬 "The standard's foundational principle establishes that artificial intelligence should support, but never replace, professional judgement in surveying services." [3]

This is the cornerstone of everything that follows. Whether a firm is using AI to assist with a RICS Home Survey or to streamline a schedule of dilapidations, the surveyor remains the accountable professional.

What Counts as "Material Impact"?

Not every digital tool triggers the standard. The applicability threshold is whether an AI system has a "material impact" on the delivery of surveying services [2]. This is a nuanced test that requires informed professional judgement — and the final authority on disputed cases rests with the RICS Regulatory Tribunal [2].

Practical examples of material impact:

| AI Use Case | Material Impact? | Rationale |
|---|---|---|
| Automated Valuation Model (AVM) informing a Red Book valuation | ✅ Yes | Directly influences client-facing output |
| Spell-check software in report writing | ❌ No | No bearing on professional conclusions |
| AI-powered thermal imaging analysis in building surveys | ✅ Yes | Shapes defect identification and advice |
| Generic scheduling software | ❌ No | Administrative function only |
| AI risk-scoring tool for dilapidations | ✅ Yes | Affects liability assessment |

When in doubt, the standard recommends erring on the side of applying the framework. Documenting that assessment — even when the conclusion is "not applicable" — is itself good governance practice [1].
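One way to keep these applicability decisions in writing is a simple assessment log. The sketch below is illustrative only: the `MaterialImpactAssessment` schema, the field names, the assessor name, and the dates are all assumptions, not anything prescribed by the standard. It records "not applicable" conclusions alongside in-scope ones and refuses entries that lack a written rationale.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MaterialImpactAssessment:
    """Written record of one applicability decision (hypothetical schema)."""
    tool: str
    material_impact: bool
    rationale: str
    assessed_by: str
    assessed_on: date


def record_assessment(log, assessment):
    """Append an assessment, refusing entries with no written rationale."""
    if not assessment.rationale.strip():
        raise ValueError(f"{assessment.tool}: a written rationale is required")
    log.append(assessment)


def tools_in_scope(log):
    """Names of tools assessed as having material impact."""
    return [a.tool for a in log if a.material_impact]


# Illustrative entries based on the table above; names and dates are made up.
log = []
record_assessment(log, MaterialImpactAssessment(
    "AVM informing a Red Book valuation", True,
    "Directly influences client-facing output", "A. Surveyor", date(2026, 3, 2)))
record_assessment(log, MaterialImpactAssessment(
    "Spell-check software", False,
    "No bearing on professional conclusions", "A. Surveyor", date(2026, 3, 2)))

print(tools_in_scope(log))
```

Both decisions stay in the log, so the firm can show that the spell-checker was considered and excluded rather than overlooked.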

Implementing the Standard: A Practical Compliance Framework

Step 1: Develop a Responsible AI Use Policy

Every RICS-regulated firm that uses or intends to use AI systems must produce a formal Responsible AI Use Policy [1][4]. This is not optional. The policy must be:

- Informed by a risk register covering data governance, system reliability, and accountability

- Reviewed regularly as AI tools and firm practices evolve

- Accessible to all relevant staff who interact with AI systems

The risk register is particularly important. It should capture which AI systems are in use, what data they process, who is accountable for each system, and what safeguards are in place if a system produces unreliable outputs.
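As a rough illustration, a risk register entry covering those four items could be modelled as a small record with a completeness check. The class name, field names, and example system are hypothetical; the standard does not mandate any particular format.

```python
from dataclasses import dataclass, field


@dataclass
class RiskRegisterEntry:
    """One AI system in the firm's risk register (hypothetical field names)."""
    system_name: str
    data_processed: list[str]
    accountable_person: str
    safeguards: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """Return the names of fields that are still empty."""
        missing = []
        if not self.data_processed:
            missing.append("data_processed")
        if not self.accountable_person.strip():
            missing.append("accountable_person")
        if not self.safeguards:
            missing.append("safeguards")
        return missing


# Illustrative entry: no accountable person or safeguards recorded yet.
entry = RiskRegisterEntry(
    system_name="Example AVM",
    data_processed=["property addresses", "transaction prices"],
    accountable_person="",
)
print(entry.gaps())  # ['accountable_person', 'safeguards']
```

A check like `gaps()` makes incomplete entries visible before the register is relied on to inform the policy.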

🔑 Key policy components to include:

- Scope statement — which AI tools are covered

- Accountability structure — named responsible individuals

- Data governance rules — how client data is handled by AI systems

- Quality assurance process — how AI outputs are verified

- Incident reporting — what happens when AI produces an error

- Review schedule — how often the policy is updated

Step 2: Conduct Pre-Deployment Governance Assessments

Before deploying any AI system with material impact, firms must conduct and record in writing a series of mandatory governance assessment steps [5]. These include evaluating:

- The reliability and accuracy of the AI system's outputs

- The data inputs the system relies on and their quality

- Bias risks — whether the system may produce unfair or skewed results

- Transparency — whether the system's reasoning can be explained to clients

- Regulatory alignment — whether use of the system complies with broader legal obligations (e.g., data protection law)

This assessment must be documented. Verbal sign-offs or informal approvals are insufficient under the standard.
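A firm might track the written record with something like the following sketch. It assumes the five evaluation areas listed above are the ones to check; the area keys, the `AssessmentNote` structure, and the example data are illustrative assumptions, and the full list of mandatory steps should be taken from the standard itself [5].

```python
from dataclasses import dataclass
from datetime import date

# Keys are shorthand for the five areas listed above (hypothetical labels).
REQUIRED_AREAS = (
    "reliability",
    "data_inputs",
    "bias",
    "transparency",
    "regulatory_alignment",
)


@dataclass(frozen=True)
class AssessmentNote:
    """A written finding for one assessment area."""
    area: str
    finding: str
    assessor: str
    recorded_on: date


def outstanding_areas(notes):
    """Areas with no written finding from a named assessor, in checklist order."""
    covered = {n.area for n in notes if n.finding.strip() and n.assessor.strip()}
    return [area for area in REQUIRED_AREAS if area not in covered]


# Illustrative notes; the names, findings, and dates are made up.
notes = [
    AssessmentNote("reliability", "Back-tested on past instructions", "J. Smith", date(2026, 2, 10)),
    AssessmentNote("bias", "No skew found across property types", "J. Smith", date(2026, 2, 11)),
]
print(outstanding_areas(notes))
```

Deployment would wait until `outstanding_areas` returns an empty list, which gives the firm a written trail rather than a verbal sign-off.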

Step 3: Maintain Human Oversight at Every Stage

The standard is unambiguous: AI outputs must be reviewed by a qualified professional before they influence any client-facing conclusion [3]. This applies whether the AI is assisting with a building survey, a homebuyer survey, or a party wall assessment.

Human oversight is not a bureaucratic formality — it is the mechanism by which professional liability is properly maintained. If an AI tool flags a structural issue, a chartered surveyor must independently assess that finding before it appears in a report. The AI is a prompt, not a verdict.
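In a reporting workflow, that principle can be enforced with a simple gate. The sketch below is a hypothetical illustration, with an assumed `AIFinding` record and made-up example findings: an AI-flagged item reaches the report only once a named surveyor has reviewed and confirmed it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIFinding:
    """An issue flagged by an AI tool (hypothetical structure)."""
    description: str
    reviewed_by: Optional[str] = None   # name of the reviewing surveyor
    confirmed: Optional[bool] = None    # surveyor's own conclusion


def report_ready(findings):
    """Findings that a named surveyor has reviewed and confirmed."""
    return [f for f in findings if f.reviewed_by and f.confirmed is True]


def awaiting_review(findings):
    """Findings no surveyor has looked at yet."""
    return [f for f in findings if not f.reviewed_by]


findings = [
    AIFinding("Possible damp at rear wall", reviewed_by="J. Smith", confirmed=True),
    AIFinding("Roof tile displacement"),
    AIFinding("Crack in lintel", reviewed_by="J. Smith", confirmed=False),
]
print([f.description for f in report_ready(findings)])
print([f.description for f in awaiting_review(findings)])
```

A finding the surveyor has reviewed and rejected appears in neither list, so it is kept on record without reaching the client-facing report.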

Sector-Specific Applications

Valuations: Navigating Automated Models Responsibly

Automated Valuation Models (AVMs) have become increasingly common in residential and commercial property markets. Under the RICS AI standard, their use in formal valuations requires careful governance. A surveyor using an AVM to support a Red Book valuation must:

- Document the AVM's methodology and data sources

- Cross-reference outputs against comparable evidence gathered independently

- Clearly disclose to clients when AI tools have contributed to a valuation

- Retain full professional accountability for the final figure

The standard does not prohibit AVM use — it requires that surveyors understand, interrogate, and verify what the model produces. A valuation that simply adopts an AVM figure without professional scrutiny would represent a compliance failure.
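A simple cross-check against independently gathered comparables might look like the sketch below. The use of the median as a benchmark and the 10% tolerance are illustrative assumptions, not figures from the standard or the Red Book, and the prices are invented. A flag here is a prompt for closer professional scrutiny, not a verdict on the valuation.

```python
from statistics import median


def avm_deviation(avm_value, comparables):
    """Relative gap between the AVM figure and the median comparable."""
    if not comparables:
        raise ValueError("at least one comparable is needed")
    benchmark = median(comparables)
    return (avm_value - benchmark) / benchmark


def needs_scrutiny(avm_value, comparables, tolerance=0.10):
    """True when the AVM figure differs from the benchmark by more than
    the tolerance (10% here is an assumed, firm-defined threshold)."""
    return abs(avm_deviation(avm_value, comparables)) > tolerance


# Invented comparable sale prices; their median is 425,000.
comparables = [410_000, 425_000, 440_000]
print(needs_scrutiny(480_000, comparables))  # True: about 12.9% above the median
print(needs_scrutiny(430_000, comparables))  # False: about 1.2% above the median
```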

For specialist valuations — such as insurance reinstatement valuations or probate valuations — the stakes of AI error are particularly high. Governance documentation becomes even more critical in these contexts.

Building Surveys: AI-Enhanced Defect Detection

AI tools are increasingly capable of analysing thermal imaging, drone footage, and photographic data to identify defects that might be missed in a standard visual inspection. When used in a Level 3 building survey, these tools can add genuine value — but only when properly governed.

Best practice for AI-assisted building surveys:

- ✅ Use AI analysis as a supplementary layer, not a primary inspection method

- ✅ Ensure the surveyor physically inspects areas flagged by AI tools

- ✅ Document which AI systems contributed to the survey process

- ✅ Explain AI involvement to clients in plain language

- ✅ Verify that AI tools have been assessed under the firm's pre-deployment governance process

The RICS building survey remains a professional service underpinned by qualified expertise. AI enhances the surveyor's capability — it does not substitute for it.

Dilapidations and Commercial Work

In commercial surveying, AI tools are being used to model dilapidations liability, analyse lease terms, and estimate reinstatement costs. For firms offering dilapidation surveys, the standard requires that any AI-generated liability assessment is reviewed by a qualified surveyor before being presented to a client or opposing party.

The adversarial nature of dilapidations disputes makes this particularly important. An AI-generated schedule that contains errors — whether through biased training data or incomplete inputs — could expose a firm to significant professional liability if not properly reviewed.

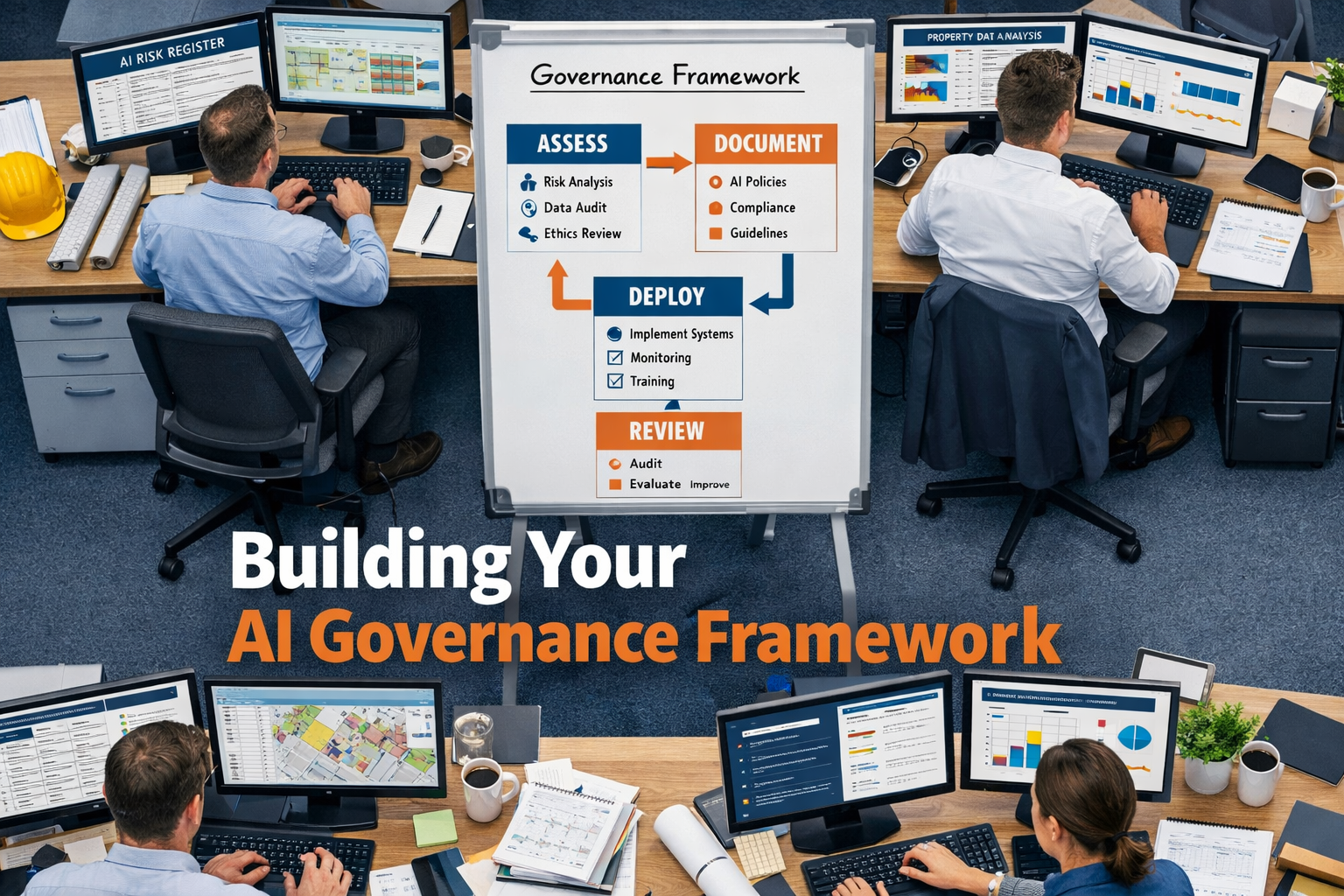

Building Your AI Governance Framework: Practical Steps for Firms

The Five Pillars of Compliant AI Use

Firms implementing the standard's requirements should structure their governance around five core pillars:

1. 🗂️ Accountability

Designate a named individual (or team) responsible for AI governance. In smaller firms, this may be a senior partner. In larger organisations, a dedicated AI governance role may be appropriate.

2. 📊 Transparency

Maintain clear records of which AI systems are in use, what they do, and how their outputs are used. Clients should be informed when AI has materially contributed to a service.

3. 🔍 Accuracy and Reliability

Regularly test AI systems against known benchmarks. If a system's accuracy degrades — due to changing market conditions or data drift — it must be reassessed before continued use.

4. 🛡️ Data Protection

AI systems often process sensitive client data. Firms must ensure that data handling complies with UK GDPR and that client data is not used to train third-party AI models without explicit consent.

5. 🔄 Continuous Review

The AI landscape is evolving rapidly. Governance frameworks must be living documents — reviewed at least annually, or whenever a significant new AI tool is adopted.
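The benchmark testing described under the third pillar can be sketched as a periodic error check. This is a minimal illustration that assumes mean absolute percentage error as the metric and a 2 percentage point margin for triggering reassessment. Both are assumptions a firm would set for itself, and the figures are invented.

```python
def mape(predicted, actual):
    """Mean absolute percentage error of predictions against known values."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [abs(p - a) / abs(a) for p, a in zip(predicted, actual)]
    return sum(errors) / len(errors)


def has_degraded(current_error, baseline_error, margin=0.02):
    """True when error exceeds the baseline by more than the margin
    (the margin is an assumed, firm-defined threshold)."""
    return current_error > baseline_error + margin


# Invented benchmark: system outputs against known outcomes.
current = mape(predicted=[105, 190], actual=[100, 200])
print(has_degraded(current, baseline_error=0.02))
```

Running a check like this on a schedule, and recording the result, also supports the continuous review described under the fifth pillar.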

Common Compliance Pitfalls to Avoid

| Pitfall | Why It's a Problem | Solution |
|---|---|---|
| Using AI without a written policy | Direct breach of the standard | Draft and implement a policy immediately |
| Adopting AI outputs without review | Undermines professional accountability | Mandate human sign-off on all AI outputs |
| Failing to disclose AI use to clients | Erodes trust; potential regulatory issue | Build disclosure into standard client communications |
| Ignoring data governance | GDPR exposure; AI accuracy risks | Include data rules in the risk register |
| Treating the policy as a one-off task | Standards and tools evolve | Schedule regular policy reviews |

Training and Competency Requirements

The standard implicitly requires that surveyors using AI tools are competent to do so [5]. This means understanding:

- What the AI system does and does not do

- The limitations of its outputs

- How to identify when an AI output may be unreliable

- How to explain AI involvement to clients in accessible terms

RICS CPD requirements already mandate ongoing competency development. Firms should incorporate AI literacy into their training programmes — not as a technical deep-dive, but as a practical understanding of the tools being used in day-to-day practice.

Why This Standard Matters for Client Trust

The introduction of the RICS AI standard is not simply a compliance exercise. It reflects a broader recognition that client trust is the foundation of the surveying profession — and that AI, if used carelessly, has the potential to undermine it.

Clients commissioning a homebuyer survey or a structural survey are making decisions worth tens or hundreds of thousands of pounds. They need to know that the advice they receive reflects genuine professional expertise — not an unverified algorithm.

The standard's requirement for transparency, human oversight, and documented governance is designed precisely to protect that trust. Firms that embrace it are not just ticking a regulatory box — they are demonstrating a commitment to professional integrity that differentiates them in an increasingly competitive market.

💬 "The first global AI standard for surveyors signals that responsible innovation and professional accountability are not in conflict — they are complementary." [6]

Conclusion: Actionable Next Steps for Surveyors in 2026

The RICS AI standard is now in force. For firms that have not yet completed their compliance journey, the following steps represent the clearest path forward:

Immediate Actions (Within 30 Days)

- Audit current AI use — identify every AI system in use across the firm and assess whether it has material impact on service delivery

- Draft a Responsible AI Use Policy — even a basic initial version is better than none; refine it as understanding develops

- Create a risk register — document each AI system, its data sources, its risks, and the controls in place

Short-Term Actions (Within 90 Days)

- Conduct pre-deployment assessments for any AI tools not yet formally evaluated

- Train staff on the standard's requirements and the firm's internal policy

- Review client communication templates to include appropriate AI disclosure language

Ongoing Actions

- Review the policy annually — or whenever a new AI tool is adopted

- Monitor RICS guidance updates — the standard will evolve as AI technology develops

- Engage with RICS resources — including the RICS Construction Journal guidance on responsible AI use

The profession's relationship with AI is still being defined. Surveyors who engage with that process thoughtfully — guided by the RICS standard and a genuine commitment to client service — will be best placed to benefit from AI's potential while maintaining the professional trust that sets chartered surveyors apart.

References

[1] AI Responsible Use Standard – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html

[2] Responsible Use of AI – https://www.rics.org/profession-standards/rics-standards-and-guidance/conduct-competence/responsible-use-of-ai

[3] RICS Introduces Global Standard for the Responsible Use of AI in Surveying – https://www.eddisons.com/news/rics-introduces-global-standard-for-the-responsible-use-of-ai-in-surveying

[4] RICS Brings First Global AI Standard for Surveyors into Effect – https://www.associationexecutives.org/resource/rics-brings-first-global-ai-standard-for-surveyors-into-effect.html

[5] Responsible Use of Artificial Intelligence in Surveying Practice September 2025 – https://www.rics.org/content/dam/ricsglobal/documents/standards/Responsible-use-of-artificial-intelligence-in-surveying-practice_September-2025.pdf

[6] RICS First Ever Standard on Responsible AI Use Now in Effect – https://www.rics.org/news-insights/rics-first-ever-standard-on-responsible-ai-use-now-in-effect